Financial data is now fast, structured, and increasingly processed by machines. APIs deliver millions of data points in milliseconds. Valuation dashboards update automatically. AI systems query key figures directly and feed them into screening engines, risk models, and portfolio construction.

And yet there appears to be a structural weakness in many financial data systems.

Figures are delivered without sufficient economic validation and without a transparent origin. The weakness usually becomes visible only when you look closely.

A customer recently reviewed some of the financial figures in detail as part of routine validation checks. He noticed that certain indicators, such as return on equity and return on investment, behaved in a way that was difficult to justify economically. In particular, cases with negative equity or a capital base close to zero yielded values that were mathematically correct but economically questionable.

His question was direct.

What does return on equity mean when equity is negative?

Should an extreme return on equity be published when equity approaches zero?

If both equity and profit are negative and result in a positive return on equity, is that meaningful?

And who is responsible for handling these special cases correctly?

These questions get to the heart of how a financial data infrastructure should be built, and of how we at Bavest approach the problem: we are trying to create real infrastructure that “thinks” for you, not just raw, “dumb” data feeds.

Key figures such as ROE, ROIC, EV/EBITDA, or P/E are often treated as standardized and universally defined. In reality, they are constructed indicators that rest on multiple assumptions.

Let's take ROE as a simple example.

ROE is equal to net profit divided by average equity.

At first glance, this seems unambiguous. In practice, it is not.

Equity can be defined in various ways. It can include or exclude minority interests. It may refer to total equity or only to share capital. It can use period-end values or averages over the period. Accounting treatment differs by jurisdiction and standard. Even the timing of adjustments can influence the denominator.

Two systems can calculate ROE using slightly different assumptions and both be mathematically correct. The difference is not necessarily a calculation error. It is the result of embedded policy decisions. Without an origin, these decisions remain invisible.
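To make this concrete, here is a minimal sketch with made-up numbers. The function name `roe` and all figures are illustrative assumptions, not a description of any particular system. It shows how two defensible denominator choices produce two different results that are both arithmetically correct.

```python
def roe(net_profit: float, equity_base: float) -> float:
    """Plain ROE: net profit divided by the chosen equity base."""
    return net_profit / equity_base


# Hypothetical figures for one company and one fiscal year.
net_profit = 120.0
equity_start = 800.0
equity_end = 1200.0

# Choice A: use period-end equity as the denominator.
roe_period_end = roe(net_profit, equity_end)

# Choice B: use the average of opening and closing equity.
roe_average = roe(net_profit, (equity_start + equity_end) / 2)

print(f"period-end: {roe_period_end:.1%}, average: {roe_average:.1%}")
```

With these inputs the two choices differ by two percentage points (10% versus 12%), purely because of the denominator definition. That is why the definition needs to travel with the value.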

The problem becomes even more fundamental when equity becomes negative.

Let's look at the ROE formula:

ROE = net profit / average equity

Mathematically speaking, division by a negative number is perfectly acceptable. The formula returns a result. From an economic point of view, however, the interpretation becomes unreliable.

When equity is negative and net profit is positive, ROE becomes negative. What does “return on negative capital” mean in this context? The concept of capital as a basis for generating returns collapses.

When both equity and net profit are negative, the return on equity becomes positive. The calculation is correct. However, the economic signal is misleading. A positive value suggests profitability, but in reality two negative values have simply cancelled each other out.

This is not a calculation error. It is a failure to distinguish between mathematical computability and economic meaning.
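A short sketch with hypothetical figures shows the effect. The helper `naive_roe` is an assumption for illustration only; it applies the formula with no checks at all.

```python
def naive_roe(net_profit: float, equity: float) -> float:
    """Apply the ROE formula blindly, with no economic checks."""
    return net_profit / equity


# Hypothetical (net_profit, equity) pairs.
cases = {
    "profit, positive equity": (50.0, 500.0),
    "profit, negative equity": (50.0, -500.0),
    "loss, negative equity": (-50.0, -500.0),
}

results = {label: naive_roe(p, e) for label, (p, e) in cases.items()}

for label, value in results.items():
    print(f"{label}: {value:+.1%}")
```

The healthy company and the loss-making company with negative equity both come out at the same positive figure, so a consumer who sees only the number cannot tell them apart.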

To address these issues adequately, a conceptual separation is necessary. Mathematical validity answers one question: can the calculation be carried out without error? Economic validity answers another: does the resulting value represent something meaningful within financial analysis?

A ratio may satisfy the first condition without satisfying the second. With negative equity, the formula can be calculated, but the economic meaning is gone. With a denominator close to zero, the calculation works, but the result is unstable and misleading. Recognizing this distinction is crucial in modern financial data systems.
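The instability near zero can be illustrated with a few hypothetical values. The numbers below are assumptions chosen for readability; profit is held constant while the capital base shrinks.

```python
net_profit = 10.0  # hypothetical, in millions

# Equity shrinking toward zero while profit stays constant.
equity_levels = [2.0, 1.0, 0.5]

ratios = [net_profit / equity for equity in equity_levels]

for equity, ratio in zip(equity_levels, ratios):
    print(f"equity {equity:>4}: ROE {ratio:.0%}")
```

Each halving of the capital base doubles the ratio, even though nothing about the company's earning power has changed. Every one of these values is computable; none of them is a useful profitability signal.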

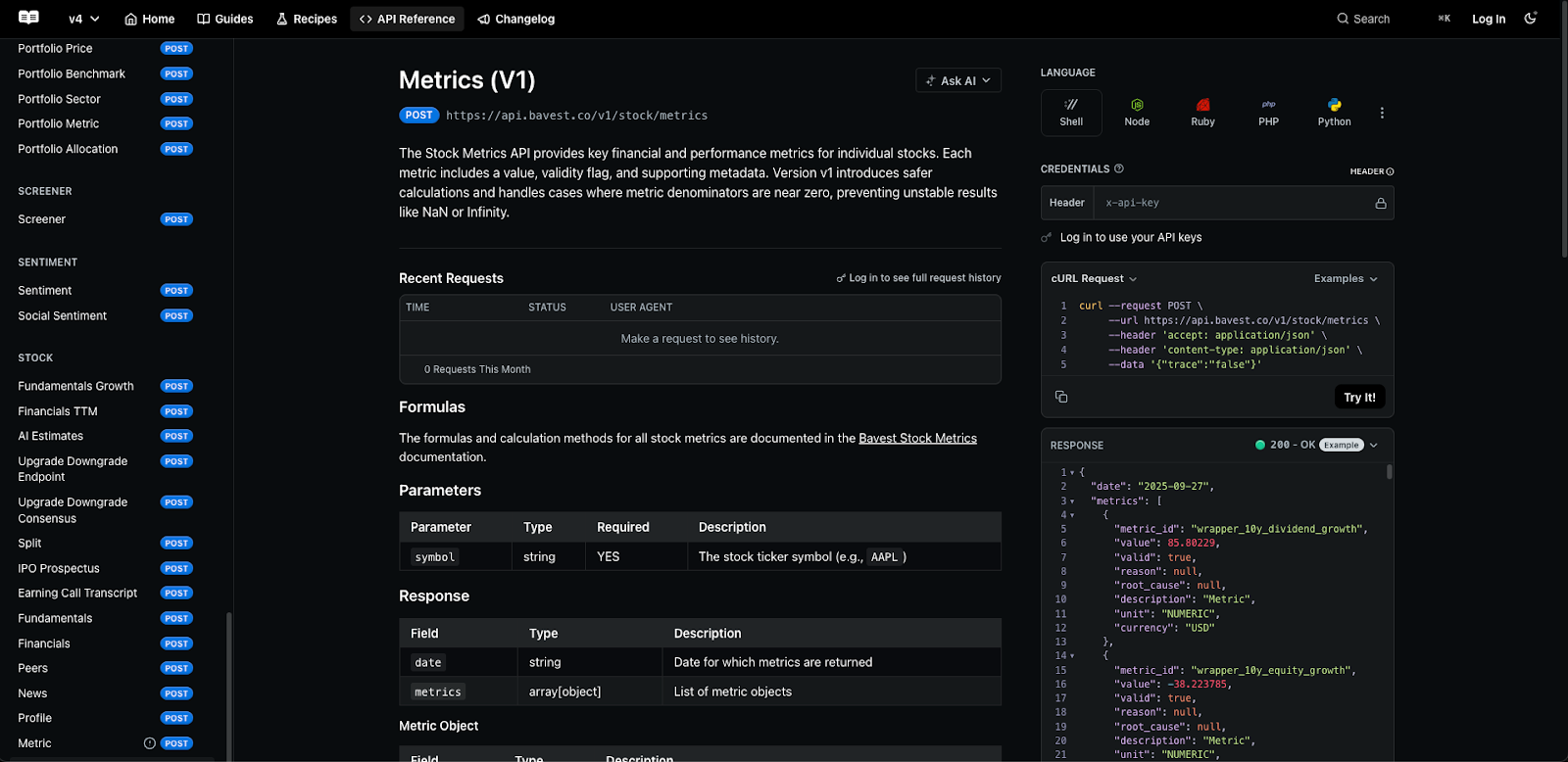

At Bavest, we have formalized economic validation as a first-class layer in the API. For each derived key figure, we assess not only whether the formula can be calculated, but also whether the result can be interpreted economically under defined rules.

For example, let's look at return on equity (ROE).

If equity is negative, the ratio is marked as economically invalid and is not silently published.

    if equity < 0:
        return {
            "valid": False,
            "reason": "EconomicallyInvalid",
            "details": "DenominatorNegative"
        }

If equity is positive but below a defined materiality threshold, the ratio is suppressed due to instability.

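    # epsilon is the defined materiality threshold; zero is included
    # so that a division by zero is never attempted.
    if 0 <= equity < epsilon:
        return {
            "valid": False,
            "reason": "EconomicallyInvalid",
            "details": "DenominatorNearZero"
        }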

If both numerator and denominator are negative, the ratio is also suppressed, as the resulting sign would be economically misleading.

    # Evaluate this before the general negative-equity rule,
    # which would otherwise match first.
    if equity < 0 and net_income < 0:
        return {
            "valid": False,
            "reason": "EconomicallyInvalid",
            "details": "NumeratorAndDenominatorNegative"
        }

These rules are deterministic, documented, and machine-readable. They ensure that economically unstable results do not slip unnoticed into downstream systems.
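Put together, the three rules could look roughly like the following sketch. The function names `validate_roe` and `invalid`, the `EPSILON` value, the `"value"` key in the success case, and the order of the checks are all assumptions made for illustration; only the `valid`, `reason`, and `details` fields and their codes come from the fragments above.

```python
from typing import Any

# Hypothetical materiality threshold in reporting currency.
EPSILON = 1_000_000.0


def invalid(details: str) -> dict[str, Any]:
    """Build the payload for an economically invalid ratio."""
    return {"valid": False, "reason": "EconomicallyInvalid", "details": details}


def validate_roe(
    net_income: float, equity: float, epsilon: float = EPSILON
) -> dict[str, Any]:
    # Most specific rule first, so it is not shadowed by the general one.
    if equity < 0 and net_income < 0:
        return invalid("NumeratorAndDenominatorNegative")
    if equity < 0:
        return invalid("DenominatorNegative")
    # Equity is non-negative here; suppress if it is below the threshold.
    if equity < epsilon:
        return invalid("DenominatorNearZero")
    return {"valid": True, "value": net_income / equity}


print(validate_roe(net_income=5_000_000.0, equity=50_000_000.0))
print(validate_roe(net_income=-5_000_000.0, equity=-20_000_000.0))
```

Because the function depends only on its inputs, the same figures always yield the same verdict, which is the deterministic behavior the rules are meant to guarantee.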

The customer who had raised the original concerns pointed to a practical impact. When distorted indicators are incorporated into valuation models, the resulting company valuations can become unreliable.

Screening systems can misclassify companies. In automated portfolio construction, companies can be overweighted because of unstable capital efficiency signals. Risk models can recalibrate themselves based on distorted inputs.

In the past, a human analyst might have noticed abnormalities and manually adjusted the interpretation. AI systems and automated agents don't use intuition. They assume that structured data delivered via an API is meaningful. Economic validation at the data level reduces the hidden fragility of downstream models.

It creates a contract between provider and consumer: if an indicator is economically unstable, it is explicitly flagged as such.
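On the consumer side, honoring that contract can be as simple as the sketch below. The tickers, the payload values, the `"value"` key, and the function `screen_high_roe` are hypothetical; the point is only that a flagged ratio is routed aside instead of being compared against a threshold.

```python
# Hypothetical ROE payloads as a screening engine might receive them.
payloads = {
    "AAA": {"valid": True, "value": 0.18},
    "BBB": {
        "valid": False,
        "reason": "EconomicallyInvalid",
        "details": "DenominatorNegative",
    },
    "CCC": {"valid": True, "value": 0.07},
}


def screen_high_roe(payloads: dict, min_roe: float = 0.15):
    """Return (tickers passing the screen, tickers flagged as invalid)."""
    passed, flagged = [], []
    for ticker, payload in payloads.items():
        if not payload["valid"]:
            # Never compare a suppressed ratio; surface it for review.
            flagged.append(ticker)
        elif payload["value"] >= min_roe:
            passed.append(ticker)
    return passed, flagged


passed, flagged = screen_high_roe(payloads)
print("passed:", passed, "flagged:", flagged)
```

The screen never has to guess whether a number is trustworthy, because the payload states it.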

Economic validation deals with the question of whether a value should be published. The origin of the data, its lineage, is concerned with how the value came about.

When a ratio is suppressed or marked, the system can explain why.

For example, an investment committee that reviews a quarterly shift in indicators should be able to see that the return on equity was marked as invalid because equity was negative during that period. This explanation should be reproducible and consistent. Lineage converts data from opaque output into a traceable infrastructure.
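One way to picture such an explanation is a small record that travels with the ratio. The class `RatioLineage`, its fields, the rule identifier, and the sample figures are all assumptions for this sketch, not a documented schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RatioLineage:
    """Hypothetical lineage record attached to a derived ratio."""
    metric: str
    period: str
    inputs: dict   # the figures the ratio was derived from
    rule: str      # identifier of the validation rule that fired
    valid: bool
    details: str


def explain(record: RatioLineage) -> str:
    """Render the same human-readable explanation for the same record."""
    status = "valid" if record.valid else f"invalid ({record.details})"
    return (
        f"{record.metric} for {record.period} is {status}; "
        f"rule={record.rule}; inputs={record.inputs}"
    )


record = RatioLineage(
    metric="ROE",
    period="2024-Q3",
    inputs={"net_income": 12.0, "equity": -40.0},
    rule="roe.denominator_negative",
    valid=False,
    details="DenominatorNegative",
)
message = explain(record)
print(message)
```

Because the record carries both the inputs and the rule that fired, the explanation can be regenerated at any time and will read the same.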

Financial data systems are increasingly consumed not by human analysts directly, but by automated systems. In this environment, implicit assumptions become structural risks. Next-generation financial infrastructure must be optimized not only for speed and coverage, but also for transparency, determinism, and explainability.

Metrics are not atomic truths. They are abstractions based on denominators that can shift, collapse, or change sign. In such cases, the data layer must not simply calculate and publish. It must evaluate and decide.

Economic validity and origin are not cosmetic features. They are architectural requirements for reliable financial systems. If a key figure can't explain itself, it shouldn't influence decisions.

In an environment where AI increasingly interacts directly with financial APIs, transparency is not optional. It is fundamental.

At Bavest, we don't think of financial data as a collection of figures. We think of it as infrastructure.

Infrastructure means responsibility. It means determinism. It means that downstream systems, whether human analysts, fintech platforms, or autonomous AI agents, can rely on predictable behavior. When a key figure becomes economically meaningless, silently publishing it is not neutral. It is a choice. And that choice transfers the risk to the customer.

We've decided to take a different approach.

We have integrated economic validity checks directly into the API layer. Each derived key figure is checked not only for its computability, but also for its economic interpretability. When a denominator becomes negative, when a base tends to zero, when a valuation multiple loses meaning because earnings are non-positive, the system doesn't simply calculate the value and pass it on. It flags the value explicitly and deterministically.
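The same pattern carries over to valuation multiples. The sketch below applies it to P/E; the function `validate_pe`, the `"value"` key, and the detail code `"EarningsNonPositive"` are hypothetical names chosen to mirror the ROE rules, not documented API values.

```python
def validate_pe(price: float, eps: float) -> dict:
    """Flag P/E as economically invalid when earnings per share are non-positive."""
    if eps <= 0:
        # A multiple of zero or negative earnings has no valuation meaning.
        return {
            "valid": False,
            "reason": "EconomicallyInvalid",
            "details": "EarningsNonPositive",  # hypothetical detail code
        }
    return {"valid": True, "value": price / eps}


print(validate_pe(price=50.0, eps=2.5))
print(validate_pe(price=50.0, eps=-1.2))
```

Checking `eps <= 0` before dividing also covers the zero-earnings case, where the formula could not be computed at all.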

This design decision reflects a more comprehensive philosophy.

We believe that financial infrastructure should be transparent, deterministic, and explainable.

In practice, this means that a customer doesn't have to develop defensive logic for every possible edge case. The logic is embedded upstream. If a key figure is economically invalid, this is clearly signaled. When a value changes, the origin explains why. When assumptions are applied, they are transparent and consistent.

What sets Bavest apart isn't just coverage or latency. It is the fact that we treat derived financial figures as structured economic constructs and not as mechanical divisions. We separate mathematical computability from economic meaning. And we encode that distinction into the data itself.

As financial systems become increasingly automated and AI-based, the cost of implicit assumptions rises. A distorted figure no longer misleads just a single analyst. It can spread through screening systems, allocation engines, and autonomous agents.

It is precisely for this reason that we have built economic validity and origin into the foundation of our platform.

Because in modern financial infrastructure, transparency is not a differentiator. It is the basic requirement.

And if a key figure cannot explain itself, it has no place in production systems.
